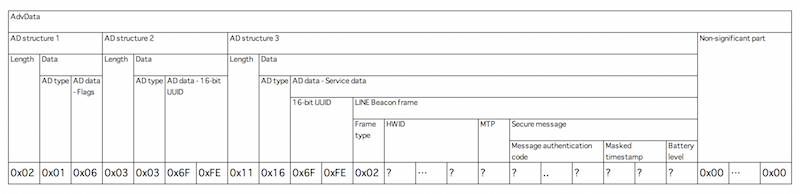

We have just one beacon that can advertise in the LINE Beacon format. This post explains LINE Beacon advertising, including the packet format.

LINE Beacons are used alongside the LINE messenger service, which enables users to exchange text, video, and voice messages on both smartphones and personal computers. This service is currently available in Japan, Taiwan, Thailand, and Indonesia. LINE offers developer APIs for both iOS and Android platforms, allowing developers to integrate LINE functionality into their own applications.

The LINE Beacon system works by sending webhook events to a LINE bot whenever a user with the LINE app comes into close range of a registered beacon. This enables developers to create context-aware interactions, tailoring the bot’s behaviour based on the user’s proximity to specific physical locations. In addition, there is a feature known as the beacon banner, which is accessible to corporate users. This allows a promotional banner to appear in the LINE messenger app when the user approaches a LINE Beacon, providing another layer of engagement for location-based services and marketing campaigns.
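The bot side of this can be sketched as follows. This is a minimal sketch that assumes the LINE Messaging API webhook shape as we understand it: each request body has an `events` list, a beacon event has `type` set to `"beacon"` and a `beacon` object carrying the beacon's hardware ID (`hwid`) and an event `type` such as `"enter"` or `"banner"`, and the request is signed in the `X-Line-Signature` header as a base64 HMAC-SHA256 of the body keyed with the channel secret. The `LOCATIONS` table, the hwid value and the reply texts are hypothetical, and sending the replies through the reply API is left out. Check the LINE developer documentation for the current event fields before relying on them.

```python
import base64
import hashlib
import hmac
import json

def signature_is_valid(channel_secret: str, body: bytes, signature: str) -> bool:
    """Compare the X-Line-Signature header with base64(HMAC-SHA256(secret, body))."""
    digest = hmac.new(channel_secret.encode("utf-8"), body, hashlib.sha256).digest()
    expected = base64.b64encode(digest).decode("ascii")
    return hmac.compare_digest(expected, signature)

# Hypothetical mapping from a registered beacon's hardware ID to a place name.
LOCATIONS = {"d41d8cd98f": "the shop entrance"}

def beacon_replies(body: bytes) -> list[str]:
    """Return a reply text for each beacon event found in a webhook body."""
    replies = []
    for event in json.loads(body).get("events", []):
        if event.get("type") != "beacon":
            continue  # ignore message, follow and other event types
        beacon = event["beacon"]
        place = LOCATIONS.get(beacon["hwid"], "an unknown location")
        if beacon["type"] == "enter":
            # The user's LINE app has come into range of the beacon.
            replies.append(f"Welcome to {place}!")
        elif beacon["type"] == "banner":
            # The user tapped the beacon banner (corporate accounts only).
            replies.append(f"Thanks for tapping the banner at {place}.")
    return replies
```

Tailoring behaviour by location is then just a matter of what the bot does with the `hwid` it receives. The signature check should run before the body is trusted.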

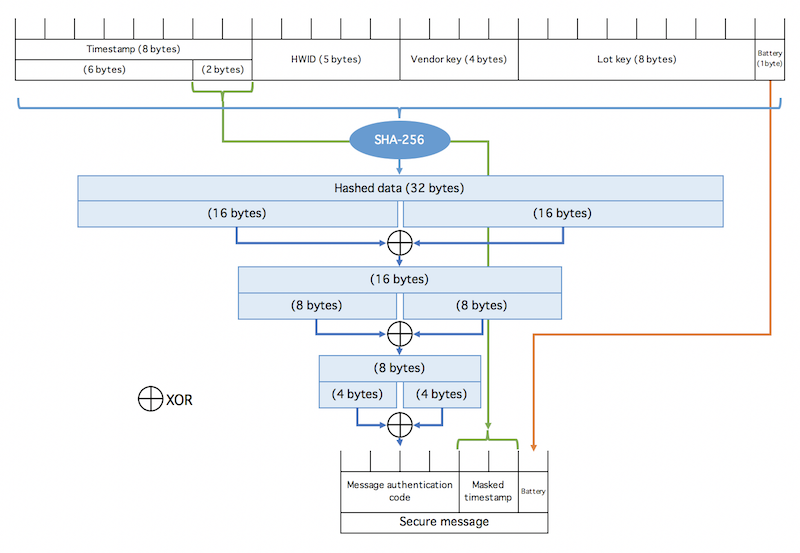

Unlike iBeacon, LINE Beacon packets have a secure message field to prevent packet tampering and replay attacks. The secure message is 7 bytes long and contains a message authentication code, a timestamp and the battery level. Secure messages are sent to the LINE platform for verification.
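To show why a MAC plus a timestamp defends against both tampering and replay, here is an illustrative sketch. It is not LINE's algorithm: the field order and sizes (a 4-byte MAC, a 2-byte timestamp and a 1-byte battery level) are a hypothetical split of the 7 bytes, and the truncated HMAC-SHA256 with a shared key is a generic stand-in for whatever the LINE platform actually uses. The real layout is in the LINE packet format documentation.

```python
import hashlib
import hmac
from dataclasses import dataclass

# HYPOTHETICAL layout for illustration: MAC (4) | timestamp (2) | battery (1).
MAC_LEN, TS_LEN = 4, 2

@dataclass
class SecureMessage:
    mac: bytes
    timestamp: int
    battery: int

def parse_secure_message(data: bytes) -> SecureMessage:
    if len(data) != MAC_LEN + TS_LEN + 1:
        raise ValueError("secure message must be 7 bytes")
    mac = data[:MAC_LEN]
    timestamp = int.from_bytes(data[MAC_LEN:MAC_LEN + TS_LEN], "big")
    return SecureMessage(mac, timestamp, data[MAC_LEN + TS_LEN])

def compute_mac(key: bytes, hwid: bytes, timestamp: int, battery: int) -> bytes:
    # Generic truncated HMAC-SHA256 over the beacon ID and the other fields.
    payload = hwid + timestamp.to_bytes(TS_LEN, "big") + bytes([battery])
    return hmac.new(key, payload, hashlib.sha256).digest()[:MAC_LEN]

def verify(key: bytes, hwid: bytes, data: bytes, last_timestamp: int) -> bool:
    msg = parse_secure_message(data)
    expected = compute_mac(key, hwid, msg.timestamp, msg.battery)
    if not hmac.compare_digest(msg.mac, expected):
        return False  # tampered: a field was changed without the key
    # Replay: a captured packet carries an old timestamp and is rejected.
    # (A real 16-bit timestamp would also need wrap-around handling.)
    return msg.timestamp > last_timestamp
```

Because the verifier holds the key, a forger cannot produce a valid MAC for altered fields, and the timestamp check stops a recorded packet from being accepted twice.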

LINE recommend that LINE Beacon packets be sent at a very high rate, once every 152 ms. LINE also recommend advertising an iBeacon (UUID D0D2CE24-9EFC-11E5-82C4-1C6A7A17EF38, Major 0x4C49, Minor 0x4E45) so that iOS devices are notified that the LINE Beacon device is nearby. This is because, while in the background, an iOS app can only detect iBeacons, and LINE Beacon packets can't wake the app.
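The companion iBeacon can be built from the values above. This sketch assembles the manufacturer-specific data payload using the standard iBeacon layout: Apple's company ID 0x004C in little-endian order, the iBeacon type byte 0x02 and length 0x15, the 16-byte UUID, big-endian major and minor, then a signed measured power byte. The measured power of -59 dBm is a hypothetical calibration value; a real beacon should use its own measured RSSI at 1 m. Note that the major and minor are simply ASCII "LI" and "NE".

```python
import struct
import uuid

LINE_IBEACON_UUID = uuid.UUID("D0D2CE24-9EFC-11E5-82C4-1C6A7A17EF38")
LINE_MAJOR, LINE_MINOR = 0x4C49, 0x4E45  # ASCII "LI" and "NE"

def ibeacon_manufacturer_data(proximity_uuid: uuid.UUID, major: int,
                              minor: int, measured_power: int) -> bytes:
    """Build the iBeacon payload carried in a manufacturer-specific AD structure."""
    header = struct.pack("<HBB", 0x004C, 0x02, 0x15)  # Apple ID (LE), type, length 21
    body = struct.pack(">HHb", major, minor, measured_power)  # big-endian fields
    return header + proximity_uuid.bytes + body

# -59 dBm is a placeholder calibration value, not a LINE requirement.
payload = ibeacon_manufacturer_data(LINE_IBEACON_UUID, LINE_MAJOR, LINE_MINOR, -59)
print(payload.hex())
```

The 25-byte result is what a beacon would place after the AD length and type (0xFF) bytes in its advertisement, interleaved with the LINE Beacon frames.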

We observe that the high advertising rate and the concurrent iBeacon advertising aren't battery-friendly, so the beacon battery isn't going to last long.
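Some rough arithmetic shows the scale. Bluetooth LE advertising intervals are set in units of 0.625 ms (the HCI advertising parameters command uses this unit), so 152 ms is not exactly representable and the nearest value is 243 units, or 151.875 ms. The sketch below counts advertising events per day under two assumptions of ours: that the iBeacon frame is sent at the same rate as the LINE frame (the recommendation doesn't say), and that a 1 s single-frame beacon is a fair comparison point. It ignores the random advertising delay the stack adds, and treats energy as roughly proportional to the number of events.

```python
ADV_UNIT_MS = 0.625  # BLE advertising interval granularity
MS_PER_DAY = 86_400_000

def interval_units(interval_ms: float) -> int:
    """Nearest advertising interval expressible in 0.625 ms units."""
    return round(interval_ms / ADV_UNIT_MS)

def adverts_per_day(interval_ms: float, frames: int = 1) -> int:
    """Advertising events per day when `frames` packet types each repeat every interval_ms."""
    return round(MS_PER_DAY / interval_ms) * frames

units = interval_units(152)
print(units, units * ADV_UNIT_MS)  # actual interval programmed

line_plus_ibeacon = adverts_per_day(152, frames=2)  # assumes iBeacon at the same rate
one_second_beacon = adverts_per_day(1000)           # comparison point only
print(line_plus_ibeacon, one_second_beacon, line_plus_ibeacon / one_second_beacon)
```

Under these assumptions the LINE configuration transmits about 13 times as often as a beacon advertising once a second, which is why battery life suffers.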

There’s more information on the LINE developer site on using beacons and the LINE packet format.