When choosing a beacon with an accelerometer, take care that it supports the anticipated use. In some cases the accelerometer controls functionality within the beacon itself, while in others it provides raw data that can be used by other Bluetooth devices such as smartphones, gateways and single-board computers such as the Raspberry Pi.

The most common use of an accelerometer is motion-triggered broadcast. Here the beacon advertises only while it is moving, which improves battery life and reduces redundant processing on observing devices. Beacons supporting motion triggering include the M52-SA Plus, F1, K15, and the H1 Wristband.
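With motion-triggered broadcast, an observing device can treat the arrival of advertisements as the movement signal. The following is a minimal sketch of that idea, not any vendor's implementation: the `MotionTracker` class, the `quiet_after_s` timeout and the beacon identifiers are all hypothetical, and it assumes a separate Bluetooth scanner calls `on_advertisement` each time a beacon is heard.

```python
from typing import Dict


class MotionTracker:
    """Infers movement from whether a motion-triggered beacon was heard recently.

    Hypothetical helper: assumes a scanner reports each advertisement with
    a beacon identifier and a timestamp in seconds.
    """

    def __init__(self, quiet_after_s: float = 10.0) -> None:
        # Assumed timeout: a beacon silent for longer than this is treated as stationary.
        self.quiet_after_s = quiet_after_s
        self._last_seen: Dict[str, float] = {}

    def on_advertisement(self, beacon_id: str, timestamp: float) -> None:
        # Record the most recent time this beacon was heard.
        self._last_seen[beacon_id] = timestamp

    def is_moving(self, beacon_id: str, now: float) -> bool:
        # A beacon never heard, or not heard within the timeout, counts as not moving.
        last = self._last_seen.get(beacon_id)
        return last is not None and (now - last) <= self.quiet_after_s
```

A suitable timeout depends on the beacon's advertising interval and on how long it keeps advertising after movement stops, so it should be tuned for the specific device. Note that silence can also mean the beacon is out of range, not just stationary.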

A few beacons, such as the iBS01G and iBS03G, interpret movement as starting, stopping or falling, and change their Bluetooth advertising accordingly.

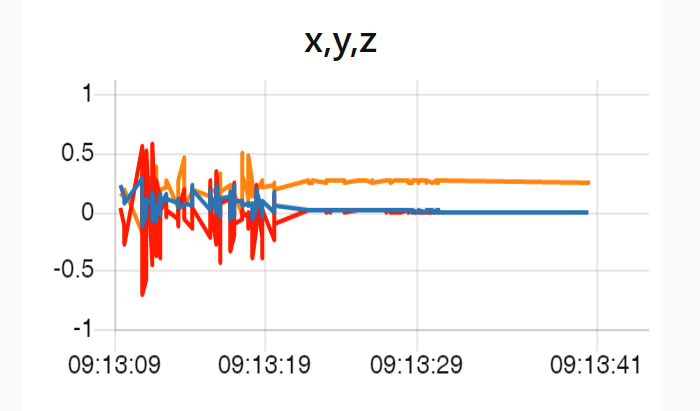

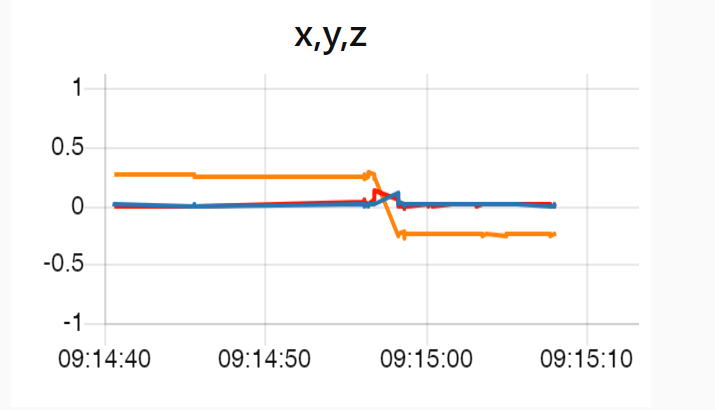

Raw acceleration data is provided by beacons such as the iBS01RG, iBS03RG, e8, K15 and B10.
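When a beacon supplies raw acceleration data, the receiving device has to do the interpretation itself. As a rough sketch of one simple approach, the code below assumes the x, y and z samples have already been decoded from the advertisement and scaled to units of g; the byte layout and scaling differ between beacons and are not shown. The function names and the `threshold_g` value are illustrative assumptions. A beacon at rest measures about 1 g due to gravity, so a noticeable deviation from 1 g suggests movement.

```python
import math
from typing import Iterable, Tuple

Sample = Tuple[float, float, float]  # (x, y, z) in g, already decoded and scaled


def magnitude_g(x: float, y: float, z: float) -> float:
    """Length of the acceleration vector in g."""
    return math.sqrt(x * x + y * y + z * z)


def is_moving(samples: Iterable[Sample], threshold_g: float = 0.15) -> bool:
    """Return True if any sample deviates from 1 g (rest) by more than threshold_g.

    threshold_g is an assumed value and needs tuning for sensor noise.
    """
    return any(abs(magnitude_g(x, y, z) - 1.0) > threshold_g for x, y, z in samples)
```

For example, `is_moving([(0.0, 0.0, 1.0), (0.5, 0.5, 1.2)])` returns `True`, while a series of samples all close to 1 g in magnitude returns `False`. This check ignores slow changes in orientation, which alter the individual axes but not the overall magnitude.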