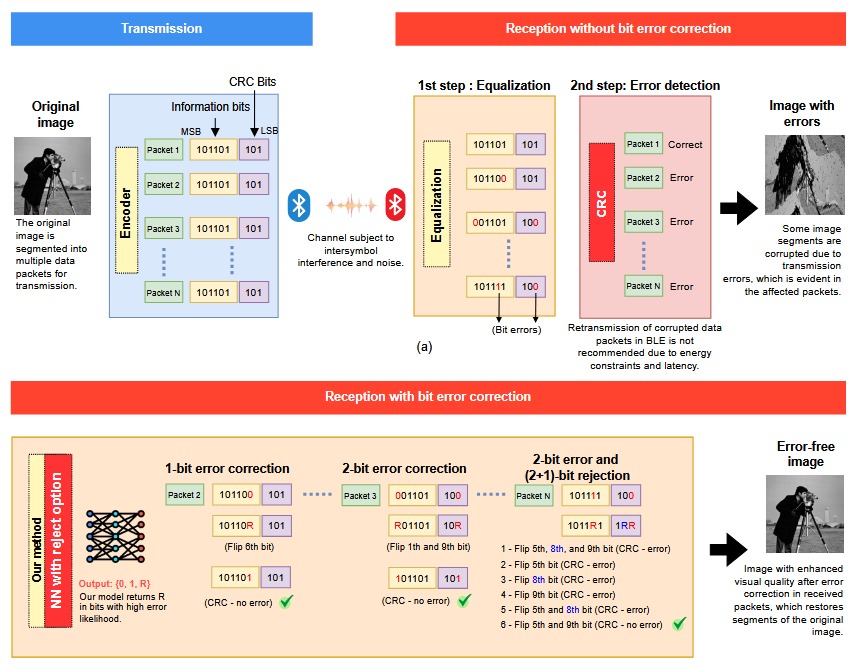

A new paper, *Error Correction in Bluetooth Low Energy via Neural Network with Reject Option* by Almeida et al. (2025), presents a method for improving data reliability in Bluetooth Low Energy (BLE) communication without modifying the transmitter. The technique combines cyclic redundancy check (CRC) error detection with a neural network that has a reject option: the network flags the bits it is unsure about, and the CRC confirms whether correcting them has repaired the packet.

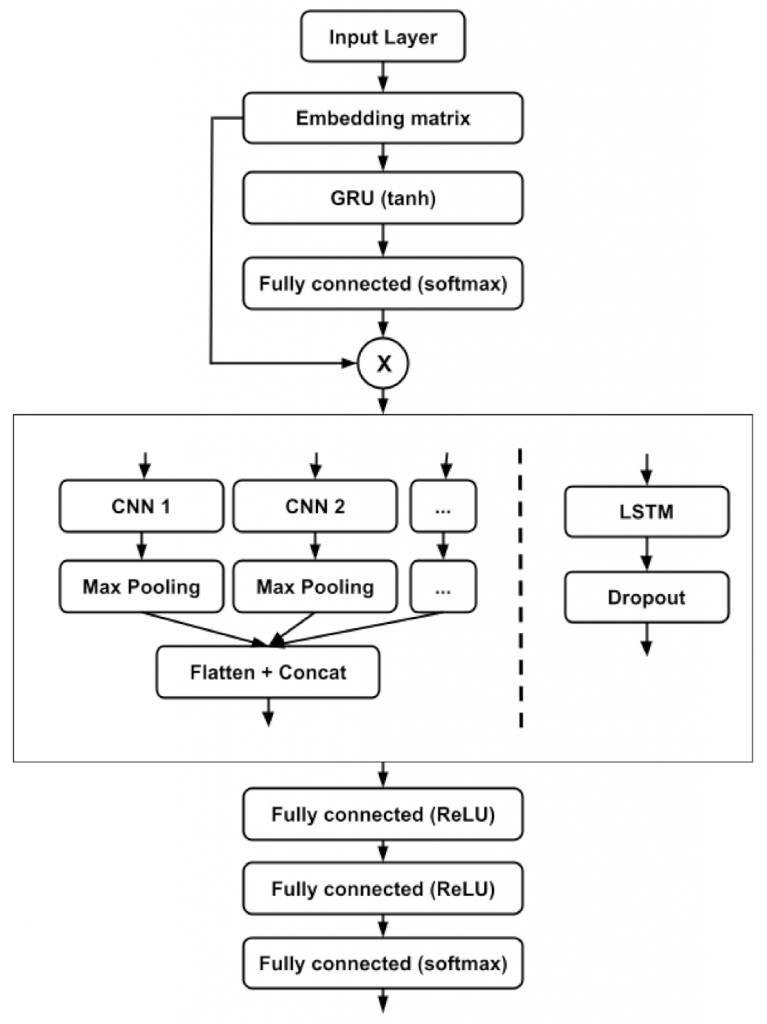

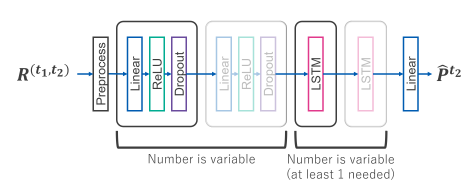

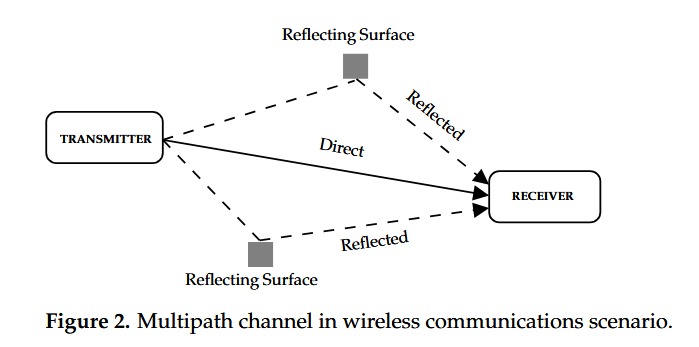

The study explains how BLE devices, particularly in Internet of Things (IoT) applications, suffer from data corruption due to multipath fading and interference. Conventional error-correcting codes such as Turbo or LDPC codes are a poor fit for BLE because of their computational and memory demands. Instead, the authors propose an Extreme Learning Machine (ELM) neural network that marks uncertain bits with a reject output (labelled R). The receiver then flips the rejected bits and rechecks the CRC, iterating until the packet validates.
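The flip-and-recheck loop can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the neural network is left out and its reject output is represented by a plain list of bit positions (`rejected`), the search order over flip combinations and the `max_flips` limit are hypothetical choices, and the CRC is a simplified MSB-first CRC-24 that uses the BLE generator polynomial but ignores BLE's actual bit ordering and whitening.

```python
from itertools import combinations

CRC24_POLY = 0x00065B  # BLE generator polynomial, x^24 term implicit
CRC24_INIT = 0x555555  # initial value used on BLE advertising channels
CRC_LEN = 24


def crc24(bits):
    """Simplified bitwise CRC-24 over a list of 0/1 ints (MSB-first)."""
    reg = CRC24_INIT
    for b in bits:
        top = (reg >> (CRC_LEN - 1)) & 1
        reg = (reg << 1) & 0xFFFFFF
        if top ^ b:
            reg ^= CRC24_POLY
    return reg


def crc_bits(payload_bits):
    """CRC of the payload as a list of 24 bits, most significant first."""
    c = crc24(payload_bits)
    return [(c >> i) & 1 for i in range(CRC_LEN - 1, -1, -1)]


def append_crc(payload_bits):
    """Build a packet: payload followed by its CRC."""
    return list(payload_bits) + crc_bits(payload_bits)


def crc_ok(packet_bits):
    """True if the trailing 24 bits match the CRC of the rest."""
    payload, tail = packet_bits[:-CRC_LEN], packet_bits[-CRC_LEN:]
    return tail == crc_bits(payload)


def correct_with_rejects(packet_bits, rejected, max_flips=2):
    """Flip subsets of the rejected positions until the CRC validates.

    `rejected` stands in for the positions the network labelled R.
    Returns the corrected packet, or None if no subset of up to
    `max_flips` rejected bits yields a valid CRC.
    """
    if crc_ok(packet_bits):
        return list(packet_bits)
    for k in range(1, max_flips + 1):
        for combo in combinations(rejected, k):
            trial = list(packet_bits)
            for i in combo:
                trial[i] ^= 1  # flip one candidate bit
            if crc_ok(trial):
                return trial
    return None
```

For example, a 32-bit payload with two flipped bits is recovered when both error positions are among the rejected candidates:

```python
packet = append_crc([1, 0, 1, 1, 0, 0, 1, 0] * 4)
received = list(packet)
received[5] ^= 1
received[20] ^= 1
fixed = correct_with_rejects(received, rejected=[3, 5, 20, 30])
```

The cost of this search grows with the number of rejected positions, so a reject output that isolates only a few uncertain bits keeps the number of CRC rechecks small.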

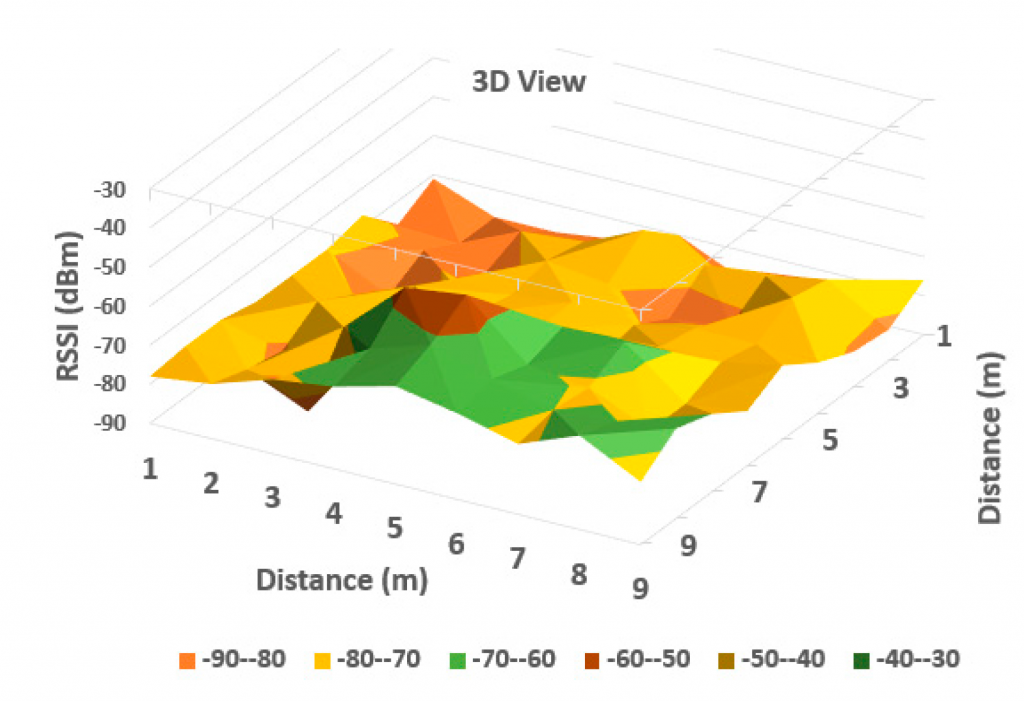

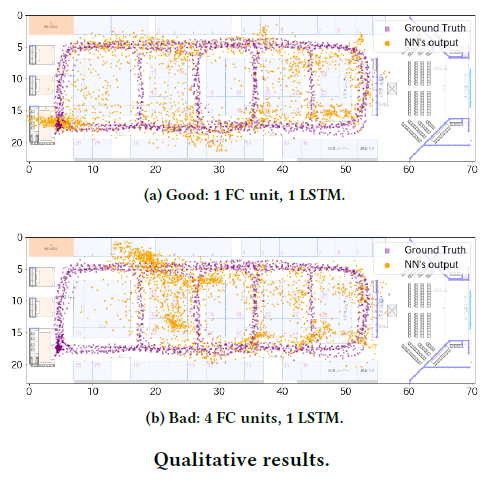

Simulations using Rayleigh fading and additive white Gaussian noise channels showed that the method corrected between 94% and 98% of single-bit errors and between 54% and 68% of double-bit errors, depending on packet size. It significantly lowered packet error rates and improved throughput compared with uncorrected transmission.
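To see why correcting single-bit errors has such a large effect on packet error rate, consider a rough back-of-the-envelope model. The sketch below assumes independent bit errors with a fixed bit error rate, which is a simplification that does not hold on a Rayleigh fading channel and is not the paper's simulation setup. The function names and parameters are hypothetical.

```python
def packet_error_rate(ber, n_bits):
    """Probability that at least one of n_bits is wrong (independent errors)."""
    return 1.0 - (1.0 - ber) ** n_bits


def residual_per(ber, n_bits, single_fix_rate):
    """Packet error rate after repairing a fraction of single-bit-error packets.

    single_fix_rate is the fraction of packets with exactly one bit error
    that the receiver manages to correct (an input, not a derived value).
    """
    p_exactly_one = n_bits * ber * (1.0 - ber) ** (n_bits - 1)
    return packet_error_rate(ber, n_bits) - single_fix_rate * p_exactly_one
```

At low bit error rates most corrupted packets contain exactly one wrong bit, so under this model a high single-bit correction rate removes most packet errors.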

When applied to compressed grayscale image transmission, the method restored visual quality under noisy conditions (signal-to-noise ratios of 9–11 dB). Measured using Structural Similarity Index (SSIM) and Peak Signal-to-Noise Ratio (PSNR), image quality improved markedly, often recovering most of the lost detail.
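For reference, PSNR is defined from the mean squared error between the original and received images as 10·log10(MAX² / MSE), where MAX is the largest possible pixel value. The sketch below computes it for 8-bit grayscale images stored as nested lists. It is a generic illustration of the metric, not the paper's evaluation code, and the function name is hypothetical.

```python
import math


def psnr(reference, received, max_value=255):
    """PSNR in dB between two equally sized grayscale images (nested lists)."""
    squared_error = 0
    count = 0
    for ref_row, rec_row in zip(reference, received):
        for ref_px, rec_px in zip(ref_row, rec_row):
            squared_error += (ref_px - rec_px) ** 2
            count += 1
    mse = squared_error / count
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)
```

A higher PSNR means the received image is closer to the original. SSIM complements it by comparing local structure rather than raw pixel differences.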

The approach outperformed other CRC-based correction algorithms such as CRC-ADMM and CRC-BP while requiring less computational power and memory. Processing times were substantially lower, enabling near real-time correction suitable for BLE and IoT devices.

The proposed neural network with reject option offers an efficient, scalable, and energy-aware method for enhancing BLE reliability without additional transmitter complexity. It reduces retransmissions, improves data integrity, and enhances performance in both data and multimedia transmission scenarios.